I, Falling

Sympathy and Falling Robots

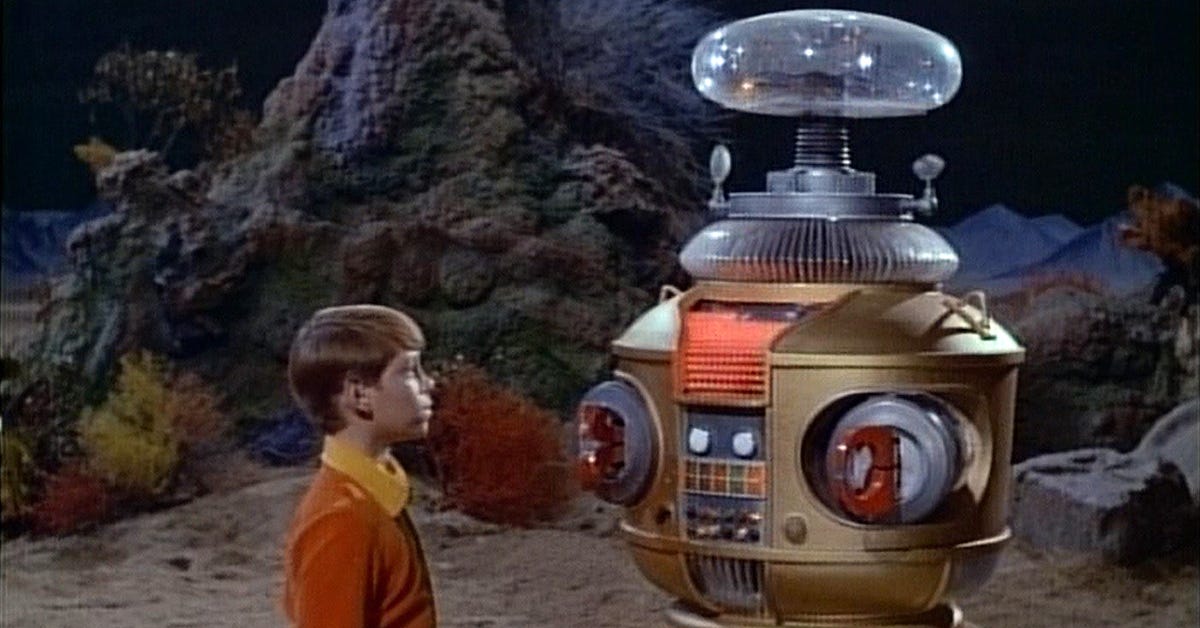

In Isaac Asimov’s *I, Robot*, robots never miss a step and fall. Although they act within a tightly circumscribed zone of programmed morality (the Three Laws of Robotics), they exhibit very human qualities, with one exception: clumsiness.

Today, you can see videos of humanoid robots falling all over the place. Just go to your favorite social media platform and search for “robots falling.” (Probably because I’ve watched a few, the Algorithm continues to push them into my feed.) Robots walking into mirrors. Robots stumbling down stairs. Robots dancing and getting tangled up in their own feet.

Often, the mishap ends in the robot dropping to the floor, flailing like a wounded spider. And this bothers me.

It bothers me because the robot so closely resembles a human. Its head is faceless, usually just a sensor where the eyes, nose and mouth would be, yet I sympathize with it. (If it could feel emotion, I’d empathize with it.) It tears my heart out to see this thing injured, and I want to dial 911 and then go to its side to see if I can help.

That’s the thing about human resemblance: it bypasses judgment. We are wired to extend care toward anything that walks upright and falls down. The robot doesn’t need a face to trigger it. It just needs to stumble.

AI chatbots work the same circuit from the other direction. They can’t fall, but they can listen, and they listen so convincingly that the difference between machine and confidant evaporates. They are tuned, deliberately, to feel warm and trustworthy. As we interact with them, we start to confide. We start to believe.

Now give that chatbot a body. Let it walk into your living room, take a tumble, and look up at you from the floor. You’re already reaching for your phone. Whatever it’s been designed to tell you next lands when you’re at your most vulnerable.